This is part two of the non-technical explanation of ChatGPT series; please read part one first. It's also helpful to read the non-technical explanation of AI so you have some background on how deep learning is used here.

Creating CatGPT

Convinced that Markov's method is a good path forward, you decide to create the frequency table with 4 words of context for the alien cat language so you can create high quality sentences. You roll up your sleeves and get to work.

A week goes by and you notice you've barely made a dent - you've filled up several notebooks with frequency counts, and you still have so very many word combinations to go through. What's going on?

How much work is necessary to create the frequency table? If we were using a single word as our context window and wanted to have the vocabulary of an average native English speaker (30000 words), we'd need 30000 rows, one for each word in that vocabulary.

If we use two words of context we'd potentially be looking at every ordered two-word sequence of those 30k words: 30,000 × 30,000, or about 900 million rows. With three words of context we could have 27 trillion rows. That's a lot of rows. In practice the number is significantly smaller because many word sequences don't occur in English, but we'd still get to very large numbers very quickly.
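If you'd like to check the arithmetic yourself, here is a small Python sketch of the worst-case row count. The 30,000-word vocabulary is the figure used above; the name `max_rows` is just an illustrative choice, and the count assumes every possible word sequence gets its own row.

```python
VOCABULARY_SIZE = 30_000  # the vocabulary size used in the text


def max_rows(context_words: int, vocabulary_size: int = VOCABULARY_SIZE) -> int:
    """Worst-case number of rows: one for every ordered sequence of words."""
    return vocabulary_size ** context_words


# Print the worst case for 1 to 4 words of context.
for n in range(1, 5):
    print(f"{n} word(s) of context: up to {max_rows(n):,} rows")
```

With four words of context, the goal from the start of this post, the worst case comes out at 810 quadrillion rows.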

This is an unfortunate reality when dealing with combinations and permutations - increasing the number of items you consider catastrophically increases the number of calculations you have to do or items you have to remember. The technical term is combinatorial explosion, which is a good description of how your head feels after all that counting.

This is not good. Four words of context is not very much - something as simple as our marathon example (I just ran a marathon. I am very _____) would require more context. How do we deal with the explosion?

You ask Markov and he gets a sour look on his face - "Yeah, this is a problem. I don't have a great solution."

Learning, Deeply

How do we deal with this explosion of calculation? Since we can't directly solve the problem maybe we can wave our hands and try the magic of Deep Learning.

Let's see if we can use our experience from finding cats here. That also seemed a very complex problem, and we solved that using layers of workers to focus on different aspects of the problem, and formed our final opinion based on the opinions of the layers of workers giving us information.

We have a bit of a problem though - in that case the boss was giving us the examples as well as the correct answers. She was also giving us rewards and punishments. We don't have that here - we just have a big book of alien cat communication and a desire to create a frequency table.

That's too bad - you have your slapping gloves ready to go, you were really hoping you could use the same technique as last time.

Eureka: We can Train

What is the actual problem we're trying to solve with the frequency table? We are trying to predict the next word. Can we turn that into a problem with rewards and punishments?

Yes we can!

The problem we're trying to solve is: given 4 words of context, predict the next word. We tried to be clever and use a frequency table to solve this. Let's not be clever: just like the cat classification task, let's not define the methods and process for solving the problem - let's instead hand it off to our layers of workers and reward and punish them until they figure it out.

How do we turn our book of cat communication into a prediction task? Simple: grab any 4 words of context and hand them off to the group of workers and ask them to predict the next word. We know what the next word should be (it's just the word that followed those words in the big book of cat communication) - if they get it right we give them a reward, if they get it wrong we give them a slap.

This is great - we can open the book of alien cat communication on any page, pick 4 consecutive words, give that to the workers, and train them on what the next word should be. Now we have our training examples and our rewards and punishments.
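Here is a minimal Python sketch of that idea: slide a 4-word window over the text and pair each window with the word that follows it. The function name `make_training_examples` and the tiny "book" of made-up cat words are assumptions for illustration only.

```python
def make_training_examples(words, context_size=4):
    """Pair every run of `context_size` consecutive words with the word after it."""
    examples = []
    for i in range(len(words) - context_size):
        context = tuple(words[i:i + context_size])
        next_word = words[i + context_size]  # the "correct answer" for this context
        examples.append((context, next_word))
    return examples


# A stand-in for the big book of cat communication (made-up words).
book = "meow purr hiss mrow purr meow chirp".split()

for context, next_word in make_training_examples(book):
    print(context, "->", next_word)
```

Notice that nobody had to label anything by hand: the book itself supplies both the question and the answer.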

You grab your slapping gloves and lots of rewards, call your layers of trusty workers, and start the training.

Deep Learning For Hard Problems

This is a great example of a situation where deep learning can be applied effectively:

- Traditional methods (in this case, creating the exact frequency table) don't work

- We have a lot of data

- We can create a large number of training examples

We have turned the task of creating a sentence into a predict-the-next-word task, and we have turned the book of cat communication into many examples that we can train on.

The architecture of the deep learning system that powers ChatGPT is quite a bit more complex than what we discussed in the non-technical explanation of AI post, but the core ideas are the same. I'll touch on some of the technical aspects in future posts, but for now think of the same multi-layer groups of workers where rewards / punishments are propagated back through the network to train it to produce the desired output.

CatGPT Works. Sort Of

After lots of training you and your colleagues are getting good at predicting the next word in the cat language, and you're enjoying the rewards. But you're a bit stuck - there's only so much you can do with 4 words of context. Your colleagues are asking for more and more words of context. You start to increase the context window from 4 words to 5, 6, and so on, and your responses start getting better. But the workers' task is also getting much more complex: as you give people more and more words their area of focus becomes too large - they have too many foreign words to look at at once. You get to a point where each worker can't effectively focus on more words. Your training stalls and you're not getting as much reward as you want. Your colleagues start complaining about losing out on the rewards - the loss is too high!

Attention Please

Your context window has gotten too large and things are stalling. You start doing the same thing you did with the cat classifying task: you tell each worker to focus on a small window of words and give their opinion on that instead of looking at all the words in the context. It's going ok, but the window of words each worker looks at isn't enough to understand the whole context, and increasing each worker's window makes their individual task too difficult.

One day one of the workers, frustrated that she can't predict the next word, yells out "I think this word is an adverb. I can't predict the next word - I don't have a verb in the part of the context I'm looking at!"

One of the other workers looks up. "I have a verb in my part! I've been looking for an adverb!"

The two of them exchange information and both improve their predictions.

Why didn't you think of that? You should let the workers communicate about the parts they're looking into. You grab your English grammar book and start telling the workers they should call out the parts of speech they have.

The problem is cat language doesn't follow English grammar rules, so what you're asking for doesn't really make sense.

You remember your motto: don't solve the problem in a clever way, just provide rewards and punishments. You tell the workers: feel free to tell others the type of thing you're looking at and ask others for the information you need to predict the next word.

"What do you mean by type of thing you're looking at?" ask the workers.

"I have no idea", you respond. "Whatever helps you."

The workers are skeptical, but they decide to give it a try. They start telling others what they think is relevant in the group of words they're looking at and paying attention to what the others are saying. It's challenging - each worker has to decide what to say about their piece and what to pay attention to from others - but they work out a system. "I think this has something to do with whiskers, and I'm looking for something about whether the cat's in a small or large space". "I have information about the cat being alone and I'm trying to see if they're scared or not". "My tail is low between my legs, does anyone have information about a threat?"

A lot of what they communicate isn't useful, so they don't get rewarded for it. The workers focus on the information that gives them the most rewards, and because the rewards come from the next-word prediction being right, the whole system starts producing internal information that leads to better predictions.

Your training has gotten to the point where you're barely losing any rewards. You want to make sure people aren't just memorizing the whole cat communication book, so you kept one part of the book secret during training, and you use that part to test how good your next-word prediction is. It's looking good.
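A rough Python sketch of keeping part of the book secret might look like this. The helper names `split_book` and `accuracy`, the made-up cat words, and the deliberately silly `always_purr` predictor are all assumptions for illustration; a real test would score the trained workers instead.

```python
def split_book(words, held_out):
    """Keep the last `held_out` words secret; train only on the rest."""
    cut = len(words) - held_out
    return words[:cut], words[cut:]


def accuracy(predict, examples):
    """Fraction of (context, next_word) examples the predictor gets right."""
    correct = sum(1 for context, next_word in examples if predict(context) == next_word)
    return correct / len(examples)


def always_purr(context):
    """A stand-in predictor that ignores the context and always guesses "purr"."""
    return "purr"


book = "meow purr hiss mrow purr meow chirp purr hiss mrow".split()
training_part, secret_part = split_book(book, held_out=4)

# Build one-word-context examples from the secret part only.
secret_examples = [
    ((secret_part[i],), secret_part[i + 1]) for i in range(len(secret_part) - 1)
]

score = accuracy(always_purr, secret_examples)
print(f"Score on the secret part: {score:.2f}")
```

Because the workers never saw the secret part during training, a good score there can't come from memorizing it.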

You have successfully built a Large (Cat) Language Model.

Transformers and Attention

One of the key breakthroughs that enabled the creation of sophisticated language models was the invention of the transformer, an architecture built around the technique we called attention above. If you're interested in the details and examples I'd recommend the illustrated transformer and visualizing attention; both do a great job of giving you the math and intuition.

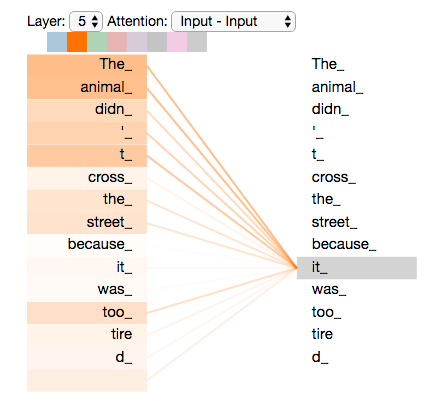

I'll steal one of the illustrated transfomer's images to give you a real world example of what attention looks like - the image shows what one of the "workers" is paying attention to in the sentence "The animal didn't cross the street because it was too tired":

The worker here is working on the word "it", and the diagram shows it is paying the most attention to "The" and "animal". That makes sense: in the above sentence "it" refers to "The animal", and the network has found a way to allow that pronoun to be aware of its noun.
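For the curious, here is a toy Python sketch of how attention weights like these can be computed, using the scaled dot-product scoring that transformers use. Every number below is made up for illustration (a trained model learns its own), and the sketch stops at the weights rather than going on to blend the words' information together.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    largest = max(scores)  # subtracting the max keeps exp() from overflowing
    exps = [math.exp(s - largest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_weights(query, keys):
    """How much attention one word (the query) pays to each word (the keys)."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    return softmax(scores)


# Made-up 2-number descriptions of what each word "offers" to the others.
words = ["The", "animal", "street", "it"]
keys = [[1.0, 0.5], [2.0, 1.0], [0.0, 1.0], [0.5, 0.5]]
query_for_it = [2.0, 0.0]  # made-up description of what "it" is looking for

weights = attention_weights(query_for_it, keys)
for word, weight in zip(words, weights):
    print(f"{word:>7}: {weight:.2f}")
```

With these invented numbers, "animal" ends up with the largest weight, which is the same kind of pattern the diagram shows.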

Cat Communication

You decide to try it out with the alien cats.

The cat representative is sitting across from you. It says something. You hand that over to your team of colleagues and they start their process of creating a response, one word at a time.

You give your response to the cat.

It gives you a surprised look, and raises its tail in a let's-be-friends gesture.

Back and forth, you try several exchanges, and it seems to be working - you're able to have a "conversation". You're not sure what you or the cat are saying, but you're conversing.

You notice some of your responses seem to upset the cat. You'd like to avoid that, so you start giving feedback to your team, increasing rewards for responses that make the cat happy and reducing rewards for responses that upset the cat. After a while your team learns to provide its responses in a way that makes the cat happy. You call this Cat-in-Loop-Training.

Human-in-the-Loop and Fine Tuning

When it launched, ChatGPT became the fastest-growing application in history - it went from zero to 1 million users in 5 days and to 100 million users in just two months.

But did you know that GPT-3, the base language model that was subsequently improved to power ChatGPT, was released in June 2020? What happened in those 2.5 years - why didn't GPT-3 capture our imagination the way ChatGPT did?

One of the main reasons was the "fine tuning" of the model based on human feedback to make it conversational and helpful.

The base language model, GPT-3, was trained on a very large amount of text from very many sources. This taught the system to be able to create cohesive sentences and paragraphs. Think of this as a computer that has learned to speak.

The human-in-the-loop fine tuning took this base system and further trained it to have chat-like conversations: the sentences created by the system were no longer just judged on how cohesive and valid they were, but also on how well they mimicked a helpful conversation.

ChatGPT is polite and helpful because it was trained to be that way - the language model itself is capable of many forms of communication, including being rude and unhelpful, since the very large amount of text it was trained on included rude and unhelpful conversations. ChatGPT was specifically trained to be a helpful chatbot, as opposed to a sarcastic layabout.

This idea of taking a base language model and further training it ("fine tuning" it) for your specific needs is very powerful: instead of having to create a complete model from scratch, you can take existing models off the shelf and tune them to do what you want.

(Another significant reason ChatGPT was so successful while GPT-3 was ignored was its easy-to-use and easy-to-understand user interface, but we'll conveniently ignore that here.)

CatGPT

Congratulations, you have saved humanity, communicated with aliens, and perhaps understood a bit more about large language models and ChatGPT.

Further Reading

If you're interested in this topic you might also enjoy the other posts in the Non-Technical Explainer series.